Fun Facts (Hints)

Mathematics has an extensive history in just about every culture or civilized society known to man. Listed below are some cool facts to get those math juices flowing:

In 1852, mathematician J. J. Sylvester established the "theory of algebraic invariants."

In 1995, mathematician Andrew Wiles proved "Fermat's Last Theorem."

The first decimal number system is said to have been invented in Ancient Egypt around 5000 BC.

The ancient Maya civilization of Central America is one of the few cultures in recorded history to have used a place-value number system.

The American Mathematical Society was formed in the late months of 1888 in New York.

Riddles and Answers © 2021

Adding Eights Riddle

Hint:

Green And Red Apple Trees Riddle

One of the apple trees had only green apples, and the other tree had only red apples. The village boys picked all the apples from both trees, and found that there were 5 red apples for every 4 green apples. Between them, the boys then ate 16 red apples and 16 green apples. When they counted the apples that were left, they found there were 3 red apples for every 2 green apples. How many apples of each color were on the trees in the first place?

Hint:
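One way to check a candidate answer is a small brute-force search over harvest sizes. This is only a sketch: the function name `solve_apples` and the search limit are made up for illustration, and the search simply tests the two ratios stated in the riddle.

```python
def solve_apples(max_unit=1000):
    """Search for a harvest that matches both ratios in the riddle."""
    for k in range(1, max_unit + 1):
        red, green = 5 * k, 4 * k              # 5 red for every 4 green
        red_left, green_left = red - 16, green - 16
        if red_left <= 0 or green_left <= 0:   # the boys ate 16 of each
            continue
        # 3 red for every 2 green remain: red_left / green_left == 3 / 2
        if 2 * red_left == 3 * green_left:
            return red, green
    return None


print(solve_apples())  # (40, 32): 40 red apples and 32 green apples
```

After the boys eat 16 of each, 24 red and 16 green apples remain, which is the required 3 to 2.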

Saving Money Riddle

Titus Scribner told his family that each month they would save twice as much as they had saved in the previous month. They would save $1 in the first month, $2 in the second month, and so on. How much money will they have saved at the end of the year?

Hint:
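The doubling plan can be added up month by month. The sketch below assumes a 12-month year starting with a $1 deposit, as the riddle states; the function name `total_saved` is just an illustrative choice.

```python
def total_saved(months=12, first_deposit=1):
    """Sum deposits that double every month."""
    deposit = first_deposit
    total = 0
    for _ in range(months):
        total += deposit
        deposit *= 2   # next month's deposit is twice this month's
    return total


print(total_saved())  # 4095, which is 2**12 - 1
```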

Guilders Expense Riddle

While building a medieval cathedral, it cost 37 guilders to hire 4 artists and 3 stonemasons, or 33 guilders for 3 artists and 4 stonemasons. What would be the expense of hiring just one of each?

Hint:

Ten guilders. An artist cost 7 guilders, and a stonemason cost 3 guilders.
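The two hiring bills form a pair of linear equations, 4a + 3s = 37 and 3a + 4s = 33. As a rough sketch, they can be solved with Cramer's rule; the helper `solve_2x2` is a hypothetical name, not something from the riddle.

```python
from fractions import Fraction


def solve_2x2(a1, b1, c1, a2, b2, c2):
    """Solve a1*x + b1*y = c1 and a2*x + b2*y = c2 using Cramer's rule."""
    det = a1 * b2 - a2 * b1
    if det == 0:
        raise ValueError("the two equations do not have a unique solution")
    x = Fraction(c1 * b2 - c2 * b1, det)
    y = Fraction(a1 * c2 - a2 * c1, det)
    return x, y


artist, stonemason = solve_2x2(4, 3, 37, 3, 4, 33)
print(artist, stonemason, artist + stonemason)  # 7 3 10
```

A shortcut also works: adding the two bills gives 7 artists and 7 stonemasons for 70 guilders, so one of each costs 10.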

Shotgun Pete Riddle

Shotgun Pete owned a lot of guns. He left a quarter of them in Death Valley, gave one to each of the three passengers in the stagecoach, and kept half of them with him. How many guns did Pete own?

Hint:
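A quick search can test candidate totals against the riddle's conditions: a quarter left behind, three given away, and half kept must account for every gun. This sketch assumes the quarter and the half are whole numbers of guns; `guns_owned` is an illustrative name.

```python
def guns_owned(limit=1000):
    """Find a total where a quarter, three, and a half add up to the total."""
    for total in range(1, limit + 1):
        if total % 4 != 0:   # a quarter (and a half) must be whole guns
            continue
        if total // 4 + 3 + total // 2 == total:
            return total
    return None


print(guns_owned())  # 12: 3 left in Death Valley, 3 given away, 6 kept
```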

One Third As Old Riddle

Hint:

Add Up To 100 Riddle

With the numbers 123456789, make them add up to 100. They must stay in the same order. You can use addition, subtraction, multiplication, and division. Remember, they have to stay in the same order!

Hint:
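A brute-force search can try every way of placing the four operators between the digits. The sketch below assumes each digit is used on its own (no joining digits into numbers like 12) and that the usual order of operations applies; `evaluate` and `find_solutions` are hypothetical helper names.

```python
from fractions import Fraction
from itertools import product


def evaluate(digits, ops):
    """Evaluate digits joined by ops, with * and / binding tighter than + and -."""
    terms = [Fraction(digits[0])]   # additive terms collected so far
    for op, d in zip(ops, digits[1:]):
        if op == "+":
            terms.append(Fraction(d))
        elif op == "-":
            terms.append(Fraction(-d))
        elif op == "*":
            terms[-1] *= d          # multiply into the current term
        else:                       # "/"
            terms[-1] /= d          # divide the current term
    return sum(terms)


def find_solutions(target=100):
    """Return every operator placement between 1..9 that hits the target."""
    digits = list(range(1, 10))
    found = []
    for ops in product("+-*/", repeat=8):
        if evaluate(digits, ops) == target:
            found.append("1" + "".join(f" {op} {d}" for op, d in zip(ops, digits[1:])))
    return found


solutions = find_solutions()
print("1 + 2 + 3 + 4 + 5 + 6 + 7 + 8 * 9" in solutions)  # True
```

One solution it finds is 1 + 2 + 3 + 4 + 5 + 6 + 7 + 8 × 9, since 28 + 72 = 100.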

How Many Spaces Riddle

Hint:

64; the space that comes after the 64th spoke would be just before the first spoke.

The Bus Stop Riddle

An empty bus pulls up at a stop and 5 people get on. At the next stop 5 people get off and twice as many people get on as at the first stop. At the third stop 10 people get off.

How many people are on the bus at this point?

Hint:

One, the bus driver.

At the 1st stop, 5 people get on. At the 2nd stop, 5 people get off and 10 (twice as many as at the 1st stop) get on. At the 3rd stop, 10 people get off. So there are (5 − 5 + 10 − 10) = 0 passengers left, which leaves the bus driver as the only person on board the bus.

Three Rivers Riddle

There are three rivers and after each river lies a grave. A man wants to leave the same number of flowers at each grave and be left with none at the end. However, each time he passes through a river, the number of flowers he has doubles. How many flowers does he have to start with so that he is left with none at the end? And how many does he leave at each grave?

Hint:

This problem has an infinite number of solutions modeled by the equation 8a = 7n, where a is the number of flowers the man starts with and n is the number of flowers he leaves at each grave. The simplest and possibly trivial solution would be to start with 0 flowers and leave 0 flowers at each grave. A more significant solution would be to start with 7 flowers and leave 8 at each grave. Any positive integer multiple of this solution also satisfies the conditions. For example, the man starts with 14 flowers and leaves 16 at each grave; so, 14 doubles to 28, and 28 − 16 = 12; 12 doubles to 24, and 24 − 16 = 8; 8 doubles to 16, and 16 − 16 = 0. The result is the same if the man starts with 21 flowers and leaves 24 flowers at each grave, or starts with 28 and leaves 32.
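The walk past the three rivers is easy to simulate: double at each river, then subtract what is left at the grave. This is a small sketch with an illustrative function name, `flowers_left`; it returns `None` if the man would run short at some grave.

```python
def flowers_left(start, per_grave, rivers=3):
    """Double the flowers at each river, then leave per_grave at the grave."""
    flowers = start
    for _ in range(rivers):
        flowers = flowers * 2 - per_grave
        if flowers < 0:      # not enough flowers for this grave
            return None
    return flowers


print(flowers_left(7, 8))    # 0: 14 - 8 = 6, 12 - 8 = 4, 8 - 8 = 0
print(flowers_left(14, 16))  # 0
```

Unrolling the loop gives 8a − 7n flowers at the end, which is zero exactly when 8a = 7n.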

Farmer Stones Riddle

A farmer had a stone that he used to measure grain on his scale. One day his neighbor borrowed the stone, and when he returned, it was broken into four pieces. The neighbor was very apologetic, but the farmer thanked the neighbor for doing him a big favor. The farmer said that now he can measure his grain in one pound increments starting at one pound all the way to forty pounds (1, 2, 3, 17, 29, 37, etc.) using these four stones.

How much do the four stones weigh?

Hint:

The stones weigh 1 pound, 3 pounds, 9 pounds and 27 pounds. These can be used in combination with each other on both sides of the scale to come up with any counterweight from 1 to 40 pounds.
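The claim can be checked by trying every placement of the four stones: each stone goes on the grain's side, on the opposite side, or stays off the scale. The sketch below uses a hypothetical helper, `measurable_weights`, and collects every positive net weight that results.

```python
from itertools import product


def measurable_weights(stones=(1, 3, 9, 27)):
    """Return every positive weight the stones can balance on a two-pan scale."""
    weights = set()
    # -1: stone sits with the grain, 0: stone unused, +1: stone on the other pan
    for placement in product((-1, 0, 1), repeat=len(stones)):
        net = sum(side * stone for side, stone in zip(placement, stones))
        if net > 0:
            weights.add(net)
    return weights


print(measurable_weights() == set(range(1, 41)))  # True
```

For example, 2 pounds of grain balances as 3 − 1: the 3-pound stone on one pan, the 1-pound stone with the grain on the other.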

A Farmer's Sheep Riddle

Hint:

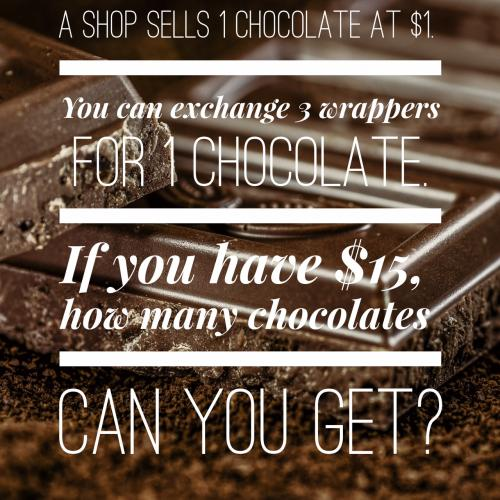

How Many Chocolates Can You Get?

A shop sells 1 chocolate at $1. You can exchange 3 wrappers for 1 chocolate. If you have $15, how many chocolates can you get?

Hint:

22 chocolates. With $15 you buy 15 chocolates. With the 15 wrappers you get 5 more chocolates. With 3 wrappers from the 5 new chocolates you get 1 more chocolate. With that 1 new wrapper and the remaining 2 wrappers you get 1 more chocolate. Hence you get a total of 15 + 5 + 1 + 1 = 22.
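The wrapper exchange can be simulated round by round. This is a minimal sketch; the function name `total_chocolates` and its parameter names are illustrative.

```python
def total_chocolates(money, price=1, wrappers_per_chocolate=3):
    """Buy chocolates, then keep trading wrappers for more until none can be traded."""
    eaten = money // price
    wrappers = eaten
    while wrappers >= wrappers_per_chocolate:
        new = wrappers // wrappers_per_chocolate
        eaten += new
        # leftover wrappers plus the wrappers from the new chocolates
        wrappers = wrappers % wrappers_per_chocolate + new
    return eaten


print(total_chocolates(15))  # 22
```

One wrapper is left over at the end, which is not enough for another exchange.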

The Jacksons Wedding Day Riddle

When the Jacksons married 18 years ago, Mr. Jackson was three times as old as his wife, and today he is just twice as old as she is.

How old was Mrs. Jackson on the wedding day?

Hint:

Mr. Jackson was 54 and his wife was 18. Now he's 72 and his wife is 36.
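The two age conditions can be checked with a short search over possible wedding-day ages. This is only a sketch; `wedding_ages` and the upper age limit are assumptions made for the example.

```python
def wedding_ages(years_married=18, max_age=120):
    """Find wedding-day ages where he was 3x her age then and is 2x her age now."""
    for wife in range(1, max_age + 1):
        husband = 3 * wife   # three times as old on the wedding day
        if husband + years_married == 2 * (wife + years_married):
            return husband, wife
    return None


print(wedding_ages())  # (54, 18)
```

In equation form: 3w + 18 = 2(w + 18), so w = 18.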

Two Girls On A Train

Two schoolgirls were traveling from the city to a dacha (summer cottage) on an electric train.

"I notice," one of the girls said, "that the dacha trains coming in the opposite direction pass us every 5 minutes. What do you think: how many dacha trains arrive in the city in an hour, given equal speeds in both directions?"

"Twelve, of course," the other girl answered, "because 60 divided by 5 equals 12."

The first girl did not agree. What do you think?

Hint:

If the girls had been on a standing train, the second girl's calculation would have been correct, but their train was moving. It took 5 minutes to meet a second train, but then it took the second train 5 more minutes to reach the spot where the girls met the first train. So the time between trains is 10 minutes, not 5, and only 6 trains per hour arrive in the city.
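The same reasoning can be written as a short calculation. The sketch assumes every train moves at the same constant speed (the value 1 below is arbitrary and cancels out); `arrivals_per_hour` is an illustrative name.

```python
from fractions import Fraction


def arrivals_per_hour(meeting_interval_min=5):
    """Convert the meeting interval seen from a moving train into city arrivals per hour."""
    speed = Fraction(1)   # assumed distance units per minute; any value works
    # Trains approach each other at twice the speed, so the gap between
    # oncoming trains is the closing speed times the meeting interval.
    gap = 2 * speed * meeting_interval_min
    # A fixed point such as the city sees one train per gap / speed minutes.
    arrival_interval = gap / speed
    return 60 / arrival_interval


print(arrivals_per_hour())  # 6
```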
